What Are Transformers? The Architecture Powering Modern AI Explained

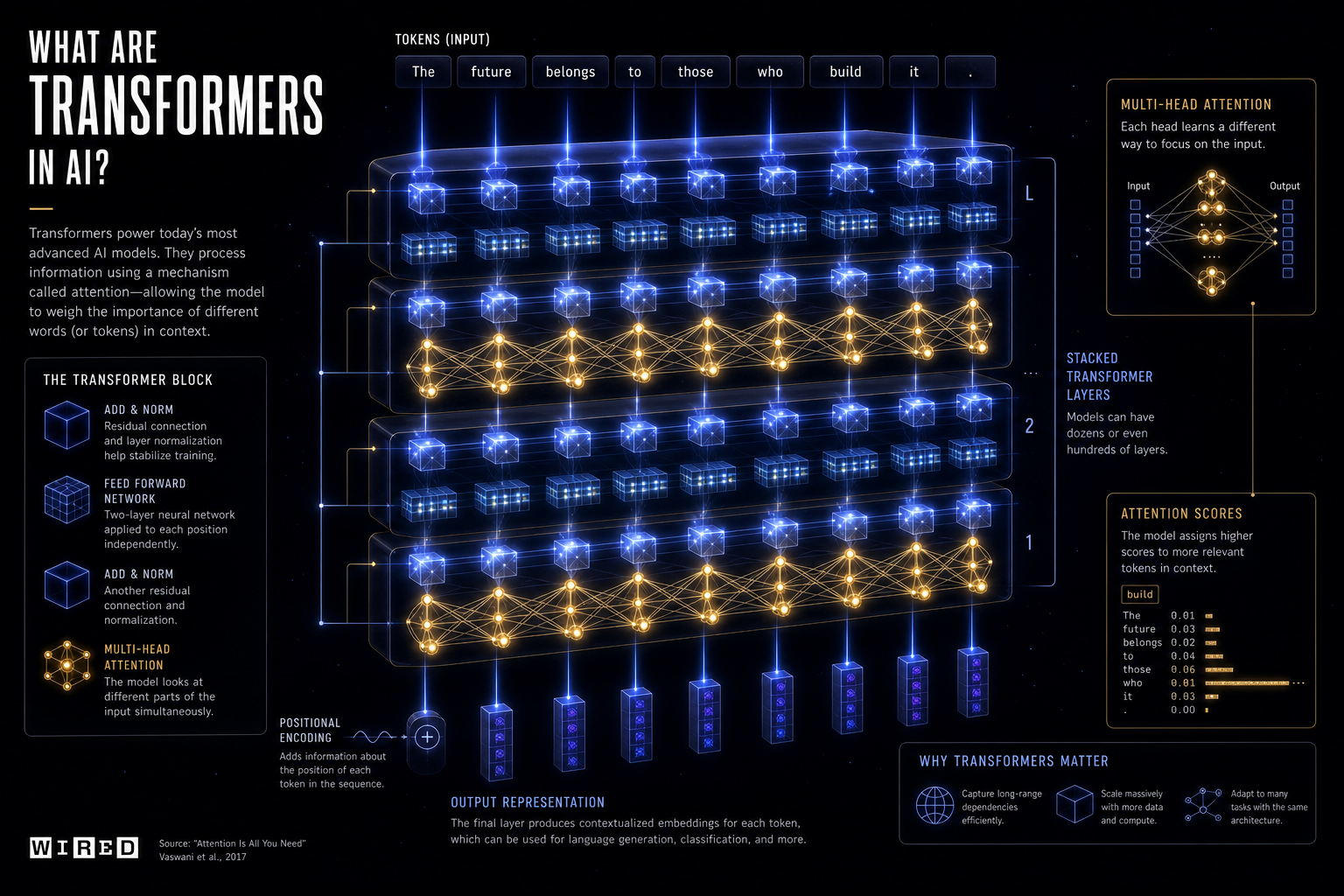

In 2017, a paper titled "Attention Is All You Need" introduced the transformer architecture. Eight years later, it underpins nearly every major large language model, along with many leading vision and speech models. Here is what it actually does, and why it matters.

The core idea: attention

The key innovation of transformers is the attention mechanism, which allows a model to weigh the importance of different parts of its input when generating each output token.
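
To make the weighing concrete, here is a minimal sketch of scaled dot-product attention, the form described in the 2017 paper, written in plain Python. It makes some simplifying assumptions: the token vectors are made-up numbers, and they are used directly as queries, keys, and values, whereas a real transformer first passes them through learned projections. The function names `softmax` and `attention` are illustrative, not taken from any library.

```python
import math


def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention(queries, keys, values):
    """Scaled dot-product attention over lists of equal-length vectors."""
    d_k = len(keys[0])
    outputs, all_weights = [], []
    for q in queries:
        # Score this query against every key, scaled by sqrt(d_k).
        scores = [
            sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k)
            for k in keys
        ]
        weights = softmax(scores)
        # The output is the weighted average of the value vectors.
        out = [
            sum(w * v[j] for w, v in zip(weights, values))
            for j in range(len(values[0]))
        ]
        outputs.append(out)
        all_weights.append(weights)
    return outputs, all_weights


# Toy input (assumed for illustration): three "tokens", each a 2-d vector.
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
outputs, weights = attention(tokens, tokens, tokens)
```

Each row of `weights` sums to 1 and says how much one token draws on every other token. In this toy input the first token overlaps with itself and the third token but not the second, so it gives the second token the smallest weight.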