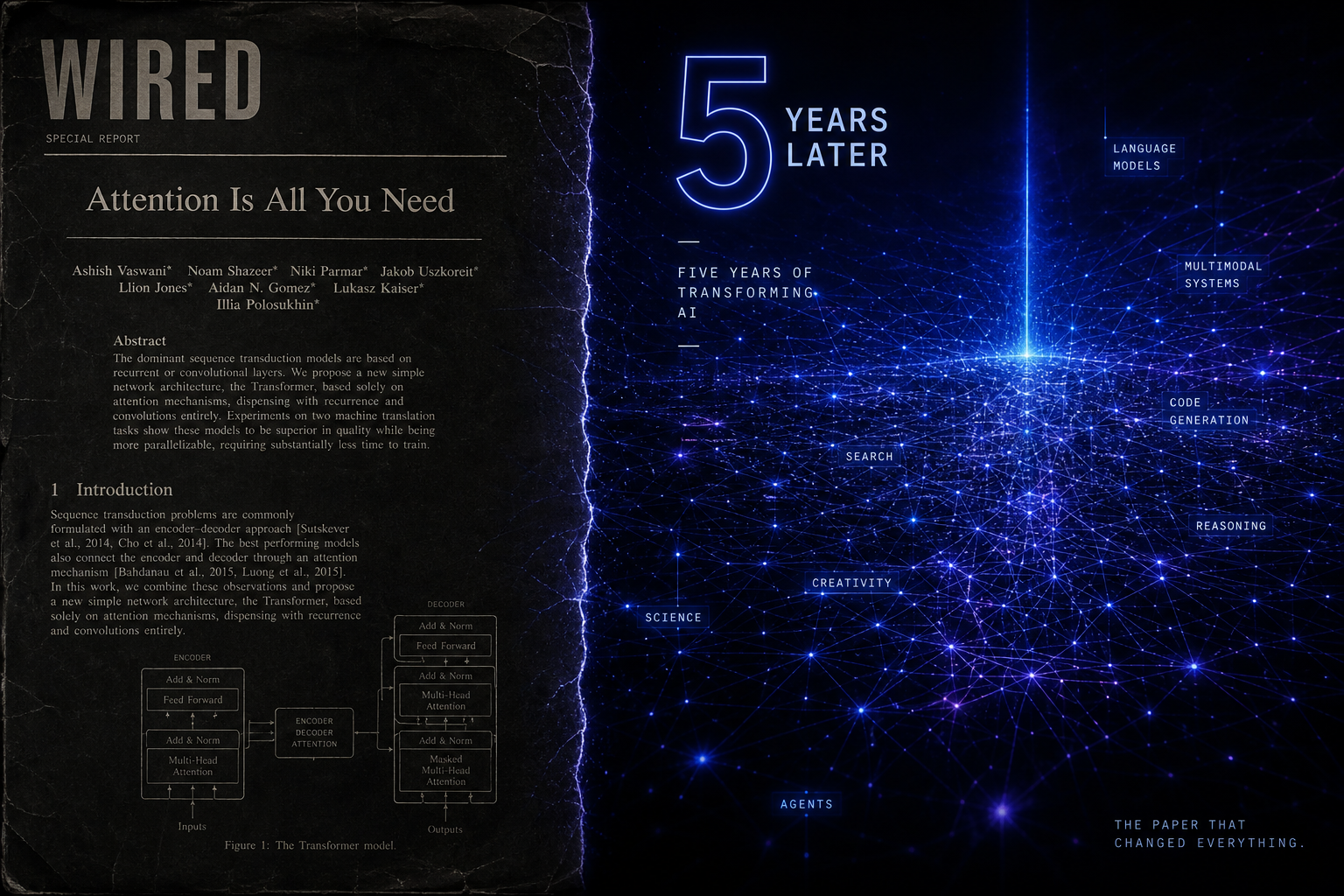

Attention Is All You Need, Nine Years Later: What the Transformer Paper Got Right, Wrong, and Left Unsaid

In June 2017, eight researchers, most of them at Google Brain and Google Research, published a 15-page paper titled Attention Is All You Need. It introduced the Transformer, an architecture based solely on attention that dispensed with the recurrent and convolutional layers that had dominated sequence modelling for years. It became one of the most-cited papers in AI history and laid the groundwork for GPT, BERT, T5, ViT, DALL-E, Stable Diffusion, and AlphaFold 2.

What the Paper Said

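Before Transformers, sequence models such as LSTMs and GRUs processed tokens one at a time. Their gating was designed to ease the vanishing gradient problem, but information from distant tokens still had to survive many sequential steps and tended to fade. The Transformer's solution: let every position attend to every other position directly. For each position, the model computes a weighted sum of the value vectors of all positions, with the weights reflecting relevance. The formula Attention(Q, K, V) = softmax(QK^T / √d_k) V, where d_k is the dimension of the key vectors, sits at the core of nearly every major AI system today.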
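A minimal sketch of that formula in plain Python, written for readability rather than speed. The function names `attention` and `softmax` are illustrative, not taken from any library; each row of Q, K and V is assumed to be the vector for one position.

```python
import math

def softmax(row):
    # Subtract the max for numerical stability; the result is mathematically unchanged.
    m = max(row)
    exps = [math.exp(x - m) for x in row]
    total = sum(exps)
    return [e / total for e in exps]

def attention(Q, K, V):
    """Scaled dot-product attention: softmax(Q K^T / sqrt(d_k)) V.

    Q, K, V are lists of row vectors, one per position; rows of Q and K have length d_k.
    """
    d_k = len(K[0])
    scale = 1.0 / math.sqrt(d_k)
    out = []
    for q in Q:
        # One score per key: how relevant each position is to this query.
        scores = [scale * sum(qi * ki for qi, ki in zip(q, k)) for k in K]
        weights = softmax(scores)
        # The output is the weight-averaged value vector.
        out.append([sum(w * v[j] for w, v in zip(weights, V)) for j in range(len(V[0]))])
    return out

same_keys = attention([[1.0, 0.0]], [[0.0, 0.0], [0.0, 0.0]], [[1.0, 2.0], [3.0, 4.0]])
```

When all keys are identical every score is equal, so the output is simply the mean of the value vectors, a quick sanity check that the weights behave as a normalised average.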

What the Paper Got Right

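Parallelisation. Because attention relates all positions in a single step, training can compute every position of a sequence at once instead of walking it token by token, which maps naturally onto GPUs (generation at inference time still proceeds token by token). This enabled training at scales impractical with LSTMs.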
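A toy sketch of why attention parallelises and recurrence does not, assuming scalar tokens and using the raw inputs directly as queries, keys and values (a deliberate simplification; real models use learned projections). The RNN weights `w` and `u` are arbitrary illustrative values, and all function names are hypothetical. Each attention output reads only the inputs, never another position's output, so positions can be dispatched concurrently; the RNN's hidden state forces a strict order.

```python
import math
from concurrent.futures import ThreadPoolExecutor

def rnn_states(xs, w=0.5, u=1.0):
    # Toy scalar RNN: h_t depends on h_{t-1}, so step t cannot start
    # until step t-1 has finished.
    h, states = 0.0, []
    for x in xs:
        h = math.tanh(w * h + u * x)
        states.append(h)
    return states

def causal_attention_at(t, xs):
    # Toy causal self-attention output for position t (d_k = 1, so no scaling).
    # It uses only the inputs xs[0..t], which makes every t independent.
    scores = [xs[t] * xs[s] for s in range(t + 1)]
    m = max(scores)
    weights = [math.exp(a - m) for a in scores]
    total = sum(weights)
    return sum(w * xs[s] for s, w in enumerate(weights)) / total

xs = [0.1, -0.4, 0.7, 0.3]
sequential = [causal_attention_at(t, xs) for t in range(len(xs))]

# Because positions are independent, they can be computed concurrently
# (pool.map returns results in input order).
with ThreadPoolExecutor() as pool:
    concurrent = list(pool.map(lambda t: causal_attention_at(t, xs), range(len(xs))))
```

This independence holds during training, where the whole target sequence is known in advance; a GPU exploits it with batched matrix products rather than threads.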

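Long-range dependencies. Any two positions are connected by a single attention step rather than by a chain of recurrent steps whose length grows with their distance, so attention captures relationships between distant tokens far more reliably than recurrence.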

Generality across modalities. Images split into patches (ViT), protein residue pairs (AlphaFold 2), audio, video, and molecular graphs can all be handled by the same mechanism once they are expressed as collections of tokens.
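As an illustration of "images as patches", here is a sketch of the ViT-style step that turns an image into a token sequence. It assumes the image is an H × W × C nested list and that H and W are divisible by the patch size; the function name `patchify` is hypothetical, and the learned linear projection and position embeddings that follow in ViT are omitted.

```python
def patchify(image, patch):
    """Split an H x W x C image (nested lists) into flattened patch x patch tiles,
    in row-major order, producing one token vector per tile."""
    H, W = len(image), len(image[0])
    if H % patch or W % patch:
        raise ValueError("image height and width must be divisible by patch")
    tokens = []
    for top in range(0, H, patch):
        for left in range(0, W, patch):
            flat = []
            for r in range(top, top + patch):
                for c in range(left, left + patch):
                    flat.extend(image[r][c])  # append all C channels of this pixel
            tokens.append(flat)
    return tokens

# A 4 x 4 single-channel image whose pixel values are 0..15, cut into 2 x 2 patches.
img = [[[r * 4 + c] for c in range(4)] for r in range(4)]
toks = patchify(img, 2)
```

For a 224 × 224 RGB image with 16 × 16 patches, the same function yields 196 tokens, each a vector of 768 numbers (16 · 16 · 3), which the Transformer then treats exactly like word tokens.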

What the Paper Got Wrong

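Positional encodings. The fixed sinusoidal encodings were largely superseded, first by learned absolute position embeddings (as in BERT and GPT-2) and later by relative schemes such as RoPE and ALiBi. These are also fixed rather than learned, but they tend to cope better with sequences longer than those seen in training.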
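The contrast between the two families can be sketched in a few lines. `sinusoidal_encoding` follows the 2017 formula PE(pos, 2i) = sin(pos / 10000^(2i/d_model)), PE(pos, 2i+1) = cos(pos / 10000^(2i/d_model)). `rope` is a simplified rotary embedding using the interleaved-pair convention of the RoFormer paper (some libraries pair dimensions differently). Both function names are illustrative. The test checks RoPE's key property: the query-key dot product depends only on the relative offset between positions.

```python
import math

def sinusoidal_encoding(pos, d_model):
    # Original scheme: a vector added to the token embedding.
    # Even dims use sin, odd dims use cos; wavelengths grow geometrically.
    pe = []
    for i in range(0, d_model, 2):
        angle = pos / (10000 ** (i / d_model))
        pe.append(math.sin(angle))
        pe.append(math.cos(angle))
    return pe[:d_model]

def rope(vec, pos, base=10000.0):
    # Rotary embedding: rotate each pair (x0, x1) by an angle proportional
    # to the position. Applied to queries and keys; nothing is learned.
    # Assumes len(vec) is even.
    d = len(vec)
    out = []
    for i in range(0, d, 2):
        theta = pos / (base ** (i / d))
        c, s = math.cos(theta), math.sin(theta)
        x0, x1 = vec[i], vec[i + 1]
        out.extend([x0 * c - x1 * s, x0 * s + x1 * c])
    return out

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

q = [0.3, -1.2, 0.5, 0.8]
k = [1.0, 0.4, -0.7, 0.2]
# Same offset (3) at different absolute positions.
score_a = dot(rope(q, 5), rope(k, 2))
score_b = dot(rope(q, 13), rope(k, 10))
```

Because rotating q by angle mθ and k by nθ leaves a dot product that depends on (m − n)θ only, `score_a` and `score_b` agree; the sinusoidal vector, by contrast, encodes absolute position and is added before attention.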

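Quadratic attention complexity. Standard attention scales as O(n²) in sequence length n, in both compute and the memory needed for the score matrix. For the sentence-length inputs of 2017 machine translation this was fine. For million-token contexts in 2026, where a single head in a single layer would compute a trillion query-key scores, it is a fundamental bottleneck. FlashAttention (exact attention whose memory grows linearly, though its compute remains quadratic), sparse attention, and recurrent alternatives such as state-space models (Mamba) and RWKV are partial solutions still being refined.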
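The memory side of this trade-off can be illustrated with the running ("online") softmax that tiled kernels such as FlashAttention build on. The sketch below is an assumption-laden simplification: one query, pure Python, no GPU tiling, and hypothetical function names. It visits keys in blocks, keeps only a running max, denominator and weighted sum, and still matches the naive result that materialises every score.

```python
import math

def attention_row_naive(q, K, V):
    # Materialises all n scores for this query before normalising.
    scale = 1.0 / math.sqrt(len(q))
    scores = [scale * sum(a * b for a, b in zip(q, k)) for k in K]
    m = max(scores)
    w = [math.exp(s - m) for s in scores]
    z = sum(w)
    return [sum(wi * v[j] for wi, v in zip(w, V)) / z for j in range(len(V[0]))]

def attention_row_online(q, K, V, block=2):
    """Same result, visiting keys in blocks with a running softmax.

    Only O(block) scores exist at any time, but every key is still scored,
    so total arithmetic over all queries remains quadratic.
    """
    scale = 1.0 / math.sqrt(len(q))
    m = -math.inf                 # running max of the scores seen so far
    z = 0.0                       # running softmax denominator
    acc = [0.0] * len(V[0])       # running weighted sum of value vectors
    for start in range(0, len(K), block):
        ks, vs = K[start:start + block], V[start:start + block]
        scores = [scale * sum(a * b for a, b in zip(q, k)) for k in ks]
        m_new = max(m, max(scores))
        # Rescale earlier partial sums to the new max (exp(-inf) == 0.0 on the first block).
        correction = math.exp(m - m_new)
        z *= correction
        acc = [a * correction for a in acc]
        for s, v in zip(scores, vs):
            w = math.exp(s - m_new)
            z += w
            acc = [a + w * vj for a, vj in zip(acc, v)]
        m = m_new
    return [a / z for a in acc]

q = [0.5, -1.0, 0.25]
K = [[1.0, 0.0, 2.0], [-0.5, 0.3, 0.1], [0.2, 0.2, 0.2], [3.0, -1.0, 0.0], [0.0, 1.5, -2.0]]
V = [[1.0, 2.0], [0.0, -1.0], [4.0, 0.5], [-2.0, 3.0], [0.7, 0.7]]
reference = attention_row_naive(q, K, V)
```

This is why such kernels cut memory without changing the quadratic compute: the block size, not n, bounds how many scores are alive at once.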

Interpretability overconfidence. The paper showed attention heatmaps suggesting that individual heads learned interpretable, syntax-like roles. Subsequent research, including Anthropic's mechanistic interpretability work, has shown that the picture is far more complex and that attention weights alone can be a misleading explanation of model behaviour.

What Nobody Noticed at the Time

The deepest discovery was not the attention mechanism; it was the accidental demonstration that scale is a first-class hyperparameter. The largest 2017 model (Transformer big) had 213M parameters. Within three years, GPT-3 had 175 billion, roughly 800 times more. Emergent capabilities, abilities that appear abruptly at scale without any theoretical prediction, were not in the paper and remain only partially understood in 2026.
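To see how quickly parameters accumulate with depth and width, the sketch below applies a widely used back-of-the-envelope estimate for decoder-only Transformers, roughly 12 · n_layers · d_model² weights plus the token embeddings, to GPT-3's published configuration (96 layers, d_model = 12288, a 50,257-token vocabulary). The function name is hypothetical, and the formula is an approximation that assumes a feed-forward width of 4 · d_model and ignores biases, layer norms and position embeddings.

```python
def approx_decoder_params(n_layers, d_model, vocab_size):
    # Per layer: ~4 * d^2 attention weights (Q, K, V and output projections)
    # plus ~8 * d^2 feed-forward weights (two d x 4d matrices) = ~12 * d^2.
    # Token embeddings (vocab_size x d_model) are counted once.
    return 12 * n_layers * d_model ** 2 + vocab_size * d_model

gpt3 = approx_decoder_params(n_layers=96, d_model=12288, vocab_size=50257)
print(f"{gpt3 / 1e9:.1f}B parameters (approximate)")
```

The estimate lands close to the reported 175 billion, and the quadratic dependence on d_model shows why widening a model inflates its size so much faster than deepening it.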

Attention Is All You Need was the opening sentence of a story nobody has finished writing yet.